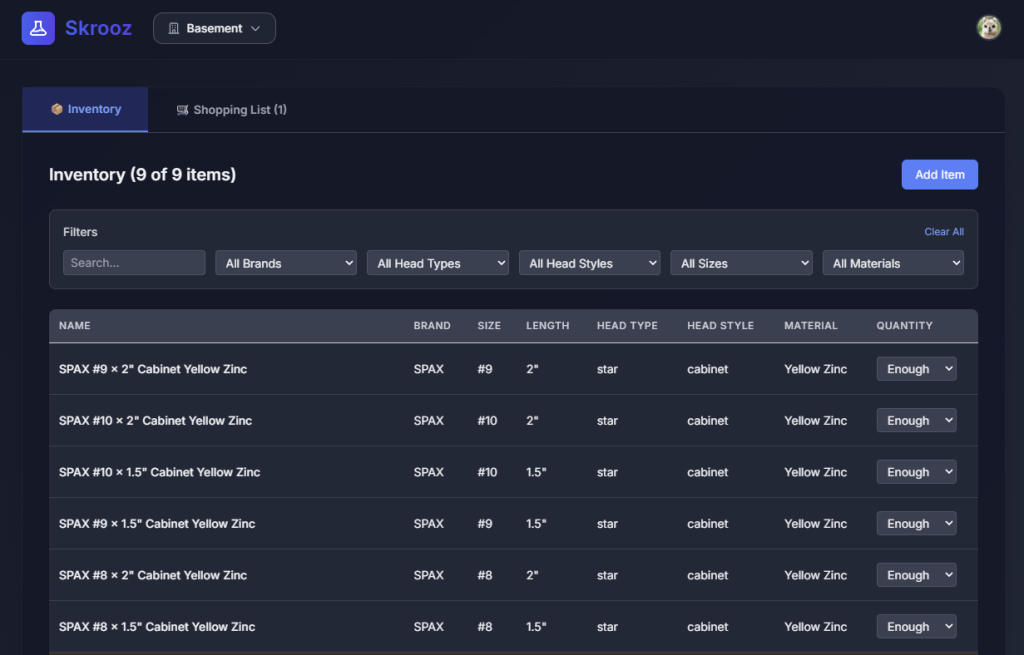

I’ve continued to work on the project I discussed in the last post, and it’s now well into the “real project” range. At the moment it’s about 150k lines of code, after 574 commits, 298 PRs, 300 issues. Many of those last two have come recently as I’ve adapted my workflow, which is what I’ll discuss in this post.

I took a few days off to work on other things, and when I came back to the project I took stock and didn’t really feel like the simple chat->code->test loop was cutting it as the system got more complicated and capable. At 150+ pages the design and architecture docs were too unwieldy for both me and Claude to reason about with scattershot ideas and feedback. In a real team this would be where you want to spread the work out so people aren’t stepping on each other’s digital toes, but this is a different shape of that problem. I could fire off random bugs and ideas and have it build things, but you either do that in series, which is slow, or in parallel, which causes lots of merge conflicts and duplicated efforts.

Themed Versions

Side Note: Experienced devs and managers will start to notice a theme here: we already know how to manage this type of stuff, and it’s the same set of practices we’ve used to manage complex projects for decades. It’s just not totally obvious up front, or even in the middle, how we can apply them, and where things can or should be different.

The first thing I did was to tag what I had as 0.1.0. Then I brain dumped everything I had queued up from big ideas to small ideas, and with Claude started to cluster these into themes. Then we mapped those themes to versions in a roadmap, with practical criteria that would define the goal of each version.

Then we drilled down into the next version, addressing specific details, refining the scope, etc. Ideas kept coming from all over, but if they don’t fit in this bucket, they simply get parked on the roadmap. With a tight scope, Claude breaks it down into tasks, which it can do very well. These tasks are pretty well specified, with background, acceptance/test criteria, related tasks, and medium level implementation details (e.g. table names, but not file names).
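To make the shape of those tasks concrete, here is a minimal sketch of how one might be represented and rendered into an issue body. The `Task` class, its field names, and the example values (including the table name) are hypothetical illustrations, not the actual format Claude produces.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """One unit of work handed to an agent (hypothetical structure)."""
    title: str
    background: str
    acceptance_criteria: list[str]
    related_tasks: list[int] = field(default_factory=list)         # issue numbers
    implementation_notes: list[str] = field(default_factory=list)  # medium-level detail only

    def to_issue_body(self) -> str:
        """Render the task as a Markdown issue body."""
        lines = ["## Background", self.background, "", "## Acceptance criteria"]
        lines += [f"- [ ] {c}" for c in self.acceptance_criteria]
        if self.related_tasks:
            lines += ["", "## Related tasks"]
            lines += [f"- #{n}" for n in self.related_tasks]
        if self.implementation_notes:
            lines += ["", "## Implementation notes"]
            lines += [f"- {n}" for n in self.implementation_notes]
        return "\n".join(lines)


# Made-up example: table names are specified, file names are left to the agent.
example = Task(
    title="Record export history",
    background="Users cannot see past exports.",
    acceptance_criteria=["Each export creates a history row", "Unit tests cover failures"],
    related_tasks=[12],
    implementation_notes=["Store rows in an `export_runs` table (hypothetical name)"],
)
print(example.to_issue_body())
```

Keeping the implementation notes at the level of table names rather than file names leaves the agent room to decide where the code lives.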

Review Time

Things are getting tricky enough now that I don’t want to do the “commit then review” approach; I want to try reviews prior to merging. I start handing the issues off to agents and having them send PRs. I also have them review each other’s work, and while they’re mostly writing good if not excellent code, the reviews are finding enough problems that I’m convinced they’re worthwhile.

So now I’ve got some agents writing, some reviewing, some addressing feedback, and others trying to merge good PRs. I didn’t expect this to work, but I had to see where it was going to fail, which it quickly did. There were endless merge conflicts, agents deleting stacked branches, conflicting details and formatting. Gemini struggled with large conflicts: it would try to fix them and would eventually succeed, but it took forever. Codex would realize what it had just stepped into and often take a more surgical approach, directly re-applying the changes to a clean main. Claude did OK, but was much slower than Codex. Claude tends to repeat obvious mistakes, so I had to add some guardrails to its MEMORY.md, which I haven’t yet had to do with the others.

Oops: Formatting and Linting

I quickly realized I had never set up any strict linting and formatting. I implemented this, which invalidated a dozen or so pending PRs that were in merge hell, so I just closed those out. I did a full formatting/linting pass with pretty much every option turned on, then re-did those PRs. This would be utterly demoralizing for human coders, but the bots don’t care and it all took a couple of hours to get back on track.

More Merging > Less Merging?

The formatting helped with the conflicts, but the agents were still butting heads frequently. I slogged through wrapping up that version, which took a couple of nights, and then took a new approach for the next one. This time I had the issues more strictly organized using GitHub sub-issues. I had also started to realize that Codex was consistently doing everything way faster than the others, and at similar or possibly even higher quality for the smaller scoped tasks. Also, Codex on the $20 plan seems to get more coding work done than the Claude $125 plan, which I frequently exhaust even when using mostly Sonnet for coding tasks.

I told Codex to send me a PR for all open issues, of which there were about 48. It cranked for a while and finished the job. Then I had Claude review all of the PRs, leaving feedback as comments. Then Codex addressed all of the comments. For a human coder, this would be kind of a bonkers approach, but it worked well. Finally, I had Codex merge the PRs in batches, in whatever order it deemed appropriate. After each merge it would run the quicker tests, and after each batch it ran the full tests: unit, integration, E2E, which had already passed on push so they weren’t going to be far off. Halfway through I pulled and built the app myself and tested the new stuff, then it finished. Overall this approach handled more work in a lot less time. I don’t know how big this scales, but 48 issues is a pretty good sized chunk of work in terms of planning and effort on my part so I’m not sure I need to go too far beyond that.
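The merge loop is easy to sketch. The version below is only an illustration of the cadence (quick tests after each merge, full tests after each batch); `merge_in_batches` and its callable parameters are hypothetical names, and in practice Codex did all of this itself rather than running a script like this.

```python
from typing import Callable, Sequence


def merge_in_batches(
    prs: Sequence[int],
    batch_size: int,
    merge: Callable[[int], None],     # assumption: merges one PR into main
    quick_tests: Callable[[], bool],  # assumption: fast suite, True on success
    full_tests: Callable[[], bool],   # assumption: unit + integration + E2E
) -> list[int]:
    """Merge PRs in order, stopping at the first test failure."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    merged: list[int] = []
    for start in range(0, len(prs), batch_size):
        batch = prs[start:start + batch_size]
        for pr in batch:
            merge(pr)
            # Cheap check after every single merge.
            if not quick_tests():
                raise RuntimeError(f"quick tests failed after merging PR {pr}")
            merged.append(pr)
        # Expensive check once per batch.
        if not full_tests():
            raise RuntimeError(f"full tests failed after batch ending at PR {batch[-1]}")
    return merged
```

Stopping at the first failure keeps the search space small: a quick-test failure points at one PR, and a full-test failure points at one batch.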

Phases

I used that approach for two versions and it worked well, but I made a tweak on the most recent one. This version lent itself well to phases, so instead of a giant batch of 40 issues it became about 6 batches of 5-8 issues/PRs. The throughput is a bit lower, but this handled drift between phases better, so feedback on the second issue that affects the 30th issue doesn’t cause headaches, because the second issue is already merged by the time the 30th is written. I think the optimal batch size can vary here depending on the focus of the version, but it feels like the best approach I’ve tried so far.
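As a rough sketch of the phased layout, assuming each issue has been tagged with a phase number during planning (the `(issue_number, phase)` pairs and the function name are hypothetical):

```python
from collections import defaultdict


def group_into_phases(issues: list[tuple[int, int]]) -> list[list[int]]:
    """Group (issue_number, phase) pairs into batches ordered by phase.

    Each inner list is one batch to be written, reviewed, and merged
    before work on the next phase begins.
    """
    by_phase: dict[int, list[int]] = defaultdict(list)
    for number, phase in issues:
        by_phase[phase].append(number)
    return [by_phase[phase] for phase in sorted(by_phase)]


# Made-up issue numbers: two phases of two issues each.
batches = group_into_phases([(101, 2), (102, 1), (103, 1), (104, 2)])
print(batches)
```

Each batch is fully merged before the next one starts, so review feedback from an early phase is already in main when later issues are picked up.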

Syncing Up

I forgot to do this with a couple of versions, but figured the design had probably drifted a bit from the implementation, since some of the decisions were only in the roadmap or the issues. I asked Claude chat to check a few key docs to confirm this, which it did. Then I asked Claude Code (Opus) to review everything in detail. It fired up a bunch of subagents, and … immediately ate my entire 5-hour Max quota in about 20 minutes of sifting through code. It eventually finished in the next window and did a great job, but boy is it a monster for tokens on that type of work. I tried it again on the next version and it didn’t consume the whole quota, so I think it’s something to do either in chunks or frequently.

Random Bugs, No Backlog

If I come across an actual bug while I’m using it or testing it, I’ll describe it to my planning Claude session and it will file the bug on my behalf, unless it’s a symptom of a larger change, in which case it goes on the roadmap. I do not have a backlog of issues: if anything is an issue, it gets picked up and fixed. This is an anti-pattern for human developers, but it’s a lot easier to just tell the agents to fix all issues, rather than deal with tags and milestones and versions. When we plan the next version, the roadmap items get incorporated there.

Overall this feels like a pretty sustainable and productive method. It’s not as exciting or tiring as the initial burst, but it is very elastic. I can spend 10 minutes and kick off a chunk of work, or I can spend 3 hours and keep things moving while designing the upcoming work. This project is far from done, so we’ll see how we do with a progressively larger and more complex environment.